Ford Motor to share self-driving vehicle data to spark innovation

Ford Motor has announced that it will share data from its self-driving vehicles to help spur research and development of autonomous vehicles.

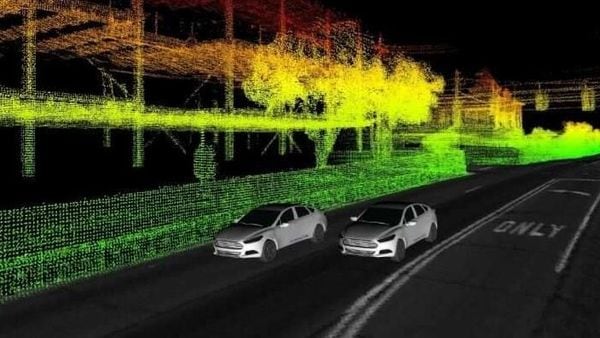

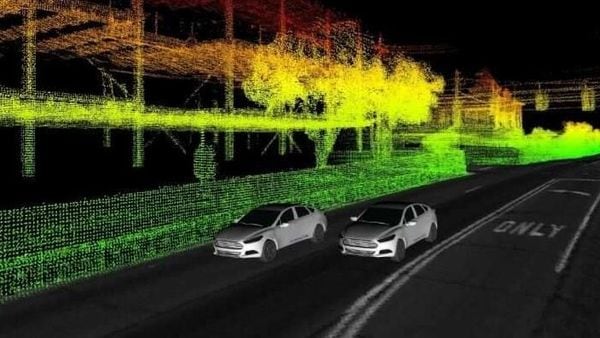

As part of this release, Ford is making available data collected from multiple self-driving research vehicles over a span of one year. The data comes from advanced research efforts that are separate from the work Ford is doing with Argo AI to develop a production-ready self-driving system. The dataset includes LiDAR and camera sensor data, GPS and trajectory information, as well as unique elements such as multi-vehicle data, 3D point cloud maps and ground reflectivity maps. A plug-in for visualising the data is also available, and the data is offered in the popular ROS format.


The dataset spans an entire year and includes seasonal variations and varied environments throughout Metro Detroit. It features data from sunny, cloudy and snowy days, and covers freeways, tunnels, residential complexes and neighbourhoods, airports, dense urban areas and construction zones.

Tony Lockwood, Autonomous Vehicle Manager, Virtual Driver Systems, Ford Motor Company, said: “Most datasets only offer data from a single vehicle, but sensor information from two vehicles can help researchers explore entirely new scenarios, especially when the two encounter each other at different points along their respective routes. Right now, one vehicle has limited ‘vision’ in terms of what it can see; you’ll note in our visualisations that some parts are not coloured in, which is because the vehicle’s sensors could not penetrate those areas. But with multiple vehicles in the same general area, it’s feasible one would detect things the others simply cannot, potentially opening up new routes for multi-vehicle communication, localisation, perception and path planning.”


The dataset to be shared by Ford will include high-resolution, time-stamped data from each vehicle’s four LiDAR sensors and seven cameras. This can help researchers work on perception problems, that is, improving the ability of a self-driving vehicle to recognise and identify people, places and things in its environment. Precise localisation and ground truth data allow researchers to see exactly how accurate their algorithms are, giving them a baseline against which to measure performance in their own research.
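To illustrate how ground truth can serve as a baseline, the following is a minimal Python sketch that scores an estimated trajectory against a reference one using root-mean-square position error. It is an assumption-laden illustration, not Ford's evaluation method: the function name `position_rmse`, the 2D `(x, y)` representation and the sample coordinates are all hypothetical and are not taken from the dataset.

```python
import math


def position_rmse(estimated, ground_truth):
    """Root-mean-square position error between two equal-length 2D trajectories.

    Both arguments are lists of (x, y) positions in metres, assumed to be
    sampled at matching timestamps (hypothetical format for illustration).
    """
    if not estimated or len(estimated) != len(ground_truth):
        raise ValueError("trajectories must be non-empty and of equal length")
    squared_errors = [
        (ex - gx) ** 2 + (ey - gy) ** 2
        for (ex, ey), (gx, gy) in zip(estimated, ground_truth)
    ]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Made-up sample values, not real dataset measurements.
ground_truth = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
estimated = [(0.0, 0.0), (1.0, 0.3), (2.0, 0.4)]

rmse = position_rmse(estimated, ground_truth)
print(f"Position RMSE: {rmse:.3f} m")
```

A lower value means the algorithm's position estimates sit closer to the reference positions; in practice a researcher would first align the two trajectories by timestamp before comparing them.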

Apart from 3D point cloud maps from LiDAR, Ford will also share access to high-resolution 3D ground plane reflectivity maps. Together, these maps will give researchers a comprehensive understanding of what these self-driving vehicles “see” in the world around them.
